Allen Conway

Exploring all things software engineering and beyond...

Tuesday, June 17, 2025

I'm Speaking! TrailBlazor Conference 2025

Wednesday, January 29, 2025

Check Out My YouTube Channel! 'The Eclectic Dev'

Come along with me and learn about the endless world of software engineering! I'll explore a wide variety of topics. Specializing primarily in web, .NET, and cloud-related technologies, I'll journey through these areas and beyond to many eclectic topics of interest to share knowledge with the wider global technology community. Check it out by clicking on the channel logo below, and please subscribe to stay tuned for a host of eclectic topics as an extension of this blog, which began over 17 years ago!

Wednesday, November 6, 2024

How to Nest Blazor's .razor Files in Visual Studio Code

When working with Blazor in Visual Studio Code, you may encounter some nuanced differences from working in Visual Studio and want greater feature parity. One such feature is the default file nesting for Razor component files, as shown below from Visual Studio:

Visual Studio Code has the capability to nest files, but by default Blazor's files are not nested and appear in parallel.

To fix this, we can update the 'File Nesting Patterns' setting, which is reachable from the Command Palette.

Open the Command Palette in Visual Studio Code by pressing Ctrl+Shift+P (PC), then enter the following search string for direct access:

@id:explorer.fileNesting.patterns

Select 'Add Item' and enter the key:

*.razor

and the value:

${capture}.razor.cs, ${capture}.razor.css, ${capture}.razor.scss, ${capture}.razor.less, ${capture}.razor.js, ${capture}.razor.ts

You can add any other applicable file extensions to the list above as needed. At this point you can close the settings and see the Blazor files nested correctly:
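For those who prefer editing settings.json directly, the same pattern can be sketched as the fragment below. This is a minimal sketch rather than the only way to do it: the `explorer.fileNesting.enabled` line is an assumption on my part that nesting still needs to be switched on in your setup, and the pattern value is the one from the steps above.

```json
{
  "explorer.fileNesting.enabled": true,
  "explorer.fileNesting.patterns": {
    "*.razor": "${capture}.razor.cs, ${capture}.razor.css, ${capture}.razor.scss, ${capture}.razor.less, ${capture}.razor.js, ${capture}.razor.ts"
  }
}
```

Here `${capture}` stands for whatever the `*` matched in the key, so Counter.razor would become the parent of Counter.razor.cs, Counter.razor.css, and so on.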

Thursday, May 23, 2024

How to Enable 'Hey Code!' Voice Interactivity for GitHub Copilot Chat

GitHub Copilot Chat 'Hey Code!' Configuration Steps

- Open the Command Palette via Ctrl+Shift+P or F1

- Type in 'accessibility' to access configuration options and select 'Preferences: Open Accessibility Settings'

- Add 'voice' to the configuration filter and select 'Accessibility > Voice: Keyword Activation'

- Select where Copilot Chat should interact with you after you say 'Hey Code!' aloud in the IDE:

  - chatInView: start a voice chat session in the chat view (i.e. the Copilot Chat main window)

  - quickChat: start a voice chat session in the quick chat (i.e. Command Palette input)

  - inlineChat: start a voice chat session in the active editor if possible (i.e. inline Copilot Chat dialog)

  - chatInContext: start a voice chat session in the active editor or view depending on keyboard focus (i.e. if the cursor is focused within code in a file, the inline Copilot Chat dialog is used; if the cursor is in the Copilot Chat main window, that window captures the dialog)
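The same choice can also be made directly in settings.json. The fragment below is a minimal sketch that assumes the setting ID behind 'Accessibility > Voice: Keyword Activation' is `accessibility.voice.keywordActivation`, and it picks `chatInContext` purely as an example value.

```json
{
  "accessibility.voice.keywordActivation": "chatInContext"
}
```

Swap in any of the other options listed above (chatInView, quickChat, or inlineChat) to change where the voice session starts.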

Tuesday, February 6, 2024

How to Fix the GitHub Copilot Chat Error: 'Cannot read properties of undefined (reading 'split')'

Sunday, November 12, 2023

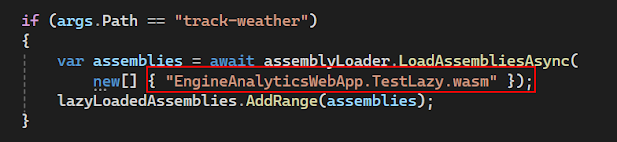

Blazor WebAssembly Lazy Loading Changes from .NET 7 to .NET 8

.csproj file updates

"Unable to find 'EngineAnalyticsWebApp.TestLazy.dll' to be lazy loaded later. Confirm that project or package references are included and the reference is used in the project" error on build

Friday, November 3, 2023

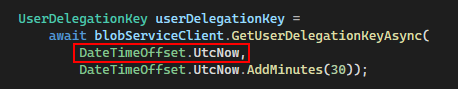

Dealing with Time Skew and SAS Azure Blob Token Generation: 403 Failed to Authenticate Error

Status 403: The request is not authorized to perform this operation using this permission

Here is a sample of code that might throw this error in which the startsOn value is set to the current time:

Before you start double-checking all of the user permissions in Azure and going through all the Access Control and Role Assignments in Blob Storage (assuming you do have them configured correctly), consider that the 403 might be a red herring for a different issue: the startsOn value passed to GetUserDelegationKeyAsync(). The problem is likely clock skew, meaning the server and client clocks differ slightly.

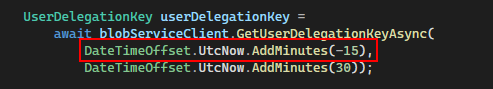

The SAS best practices in the Azure docs (found here) describe this clock skew issue and how to remedy it:

Be careful with SAS start time. If you set the start time for a SAS to the current time, failures might occur intermittently for the first few minutes. This is due to different machines having slightly different current times (known as clock skew). In general, set the start time to be at least 15 minutes in the past. Or, don't set it at all, which will make it valid immediately in all cases. The same generally applies to expiry time as well--remember that you may observe up to 15 minutes of clock skew in either direction on any request. For clients using a REST version prior to 2012-02-12, the maximum duration for a SAS that does not reference a stored access policy is 1 hour. Any policies that specify a longer term than 1 hour will fail.

The fix is simple: as instructed, either leave the startsOn value unset or set it 15 minutes (or more) in the past. With this updated code, the 403 error is resolved and the request proceeds as expected.
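As a rough illustration of that fix, here is a minimal sketch that back-dates startsOn before calling GetUserDelegationKeyAsync(). It assumes the Azure.Storage.Blobs package and a BlobServiceClient already authenticated with a Microsoft Entra ID credential. The `SasClockSkew` class and its `BackdatedStart` and `GetKeyAsync` members are hypothetical names made up for this example, not part of the SDK or of the original code.

```csharp
using System;
using System.Threading.Tasks;
using Azure.Storage.Blobs;
using Azure.Storage.Blobs.Models;

// Hypothetical helper class for illustration only.
public static class SasClockSkew
{
    // Skew allowance taken from the Azure SAS best practices quoted above.
    public static readonly TimeSpan Allowance = TimeSpan.FromMinutes(15);

    // Returns a start time back-dated by the skew allowance.
    public static DateTimeOffset BackdatedStart(DateTimeOffset now) => now - Allowance;

    // Assumes 'client' was created with a token credential, which
    // user delegation keys require.
    public static async Task<UserDelegationKey> GetKeyAsync(
        BlobServiceClient client, TimeSpan lifetime)
    {
        DateTimeOffset now = DateTimeOffset.UtcNow;

        // Risky: startsOn = now can be rejected with a 403 when the
        // service clock is slightly behind the caller's clock.
        // Safer: back-date startsOn (passing null leaves it unset).
        DateTimeOffset startsOn = BackdatedStart(now);
        DateTimeOffset expiresOn = now + lifetime;

        return await client.GetUserDelegationKeyAsync(startsOn, expiresOn);
    }
}
```

A caller might use it as `var key = await SasClockSkew.GetKeyAsync(blobServiceClient, TimeSpan.FromHours(1));`, where the one-hour lifetime is just an example value.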